The Future of AI in Mobile Apps: 7 Trends That Will Define 2026 and Beyond


Everyone told us AI would kill apps.

The numbers say the opposite.

App releases across the App Store and Google Play are up 60% year-over-year in Q1 2026. In April 2026 alone, new app launches are up 104% compared to the same month last year. Generative AI mobile apps generated $3 billion in revenue in 2025 — a 273% year-over-year increase — making it the fastest-growing segment in the entire mobile ecosystem.

At Moonstack, we have been providing website development services since 2014 — for startups raising their first round, for enterprises serving millions of users, and for companies like EnKash, Nuvama Wealth, and Pickright.

This is not a trend report assembled from press releases. This is what we are actually seeing on the ground, backed by the freshest data available, and written for business owners, founders, and product teams who need to make real decisions — not just read about AI in the abstract.

Why 2026 Is the Year That Changes Everything for Mobile AI

Before we get into the trends, it is worth understanding the scale of what is happening.

The global mobile app development market stands at $305 billion in 2026 and is projected to reach $618 billion by 2031. The AI-specific segment within that market — the portion of apps where AI is a primary feature or architectural component — is estimated to reach $221.9 billion by 2034, growing at a CAGR of over 31%.
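The two market-size figures imply a growth rate the paragraph does not state. As a quick check, here is the compound annual growth rate (CAGR) between $305 billion in 2026 and $618 billion in 2031. This is plain arithmetic on the quoted numbers, not a figure taken from the underlying market report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market size figures quoted above, in billions of US dollars (2026 -> 2031).
implied = cagr(305, 618, 2031 - 2026)
print(f"Implied CAGR: {implied:.1%}")  # Implied CAGR: 15.2%
```

At roughly 15% a year for the market as a whole, the 31%+ CAGR quoted for the AI segment means it is growing about twice as fast as mobile app development overall.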

These are not projections about some distant future. They are describing money being spent right now.

What has changed is not just the technology — it is what users expect. Three years ago, a mobile app with AI-powered recommendations felt impressive. Today, users expect their apps to know them, adapt to them, and anticipate what they need before they ask. 70% of mobile apps now use AI features to improve user experience, and 63% of mobile developers are actively integrating AI into their builds.

The apps that are losing ground in 2026 are not the ones with bad design or slow load times (although those still matter). They are the apps that feel static. The ones that show every user the same interface, the same content, the same flow — regardless of who that user is or what they have done before.

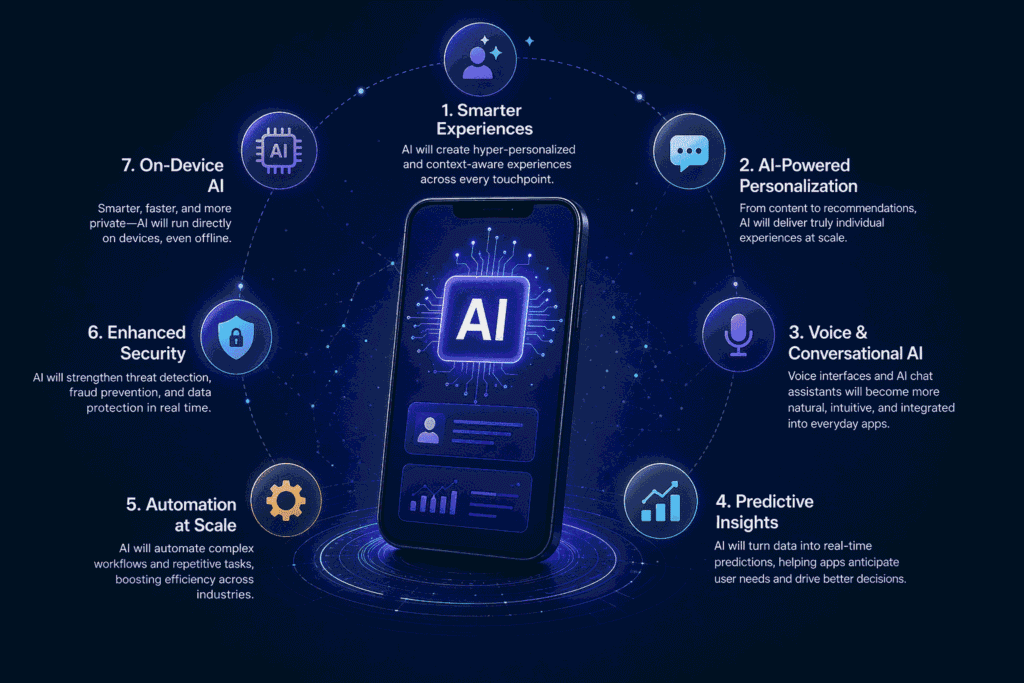

The 7 trends below are how the best apps are fixing that.

Trend 1: AI-Native Architecture — Building Around Intelligence, Not On Top of It

There is a fundamental difference between an app that has AI features and an app that is built on AI.

Most apps built before 2023 were designed as traditional software products first. Screens, navigation flows, databases, APIs — all decided and built. Then, at some point, someone asked: “Can we add AI?” What followed was an integration layer bolted on top of an existing structure. It worked, sometimes well, but it created ceilings. The architecture was not designed for intelligence. It was designed for instruction-following.

In 2026, that approach is creating real technical debt — and real product disadvantages.

AI-native apps are architected differently. The data pipeline, the model layer, the personalisation logic — these are first-class citizens in the design, not afterthoughts. The product team starts by asking: “What does this app need to know about each user, and when does it need to know it?” — before a single screen is wireframed.

The momentum behind this shift shows up clearly in the forecasts. Gartner predicts that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025, which would be more than an 8x increase in a single year.

What this looks like in practice:

In an AI-native fitness app, the onboarding flow does not ask users a series of questions and then set a static programme. Instead, it observes the user’s behaviour across the first three sessions (how long they rest between sets, which exercises they skip, what time of day they train) and begins adapting the programme automatically. No survey required. No manual settings. The app just learns.

In a fintech app built with AI-native architecture, the transaction dashboard does not show the same view to every user. A user who checks their balance every morning gets a different home screen than a user who only opens the app to make transfers. The app surfaces what each person actually uses, based on what it has observed, not on what a designer assumed they would want.
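Here is a minimal sketch of the fintech example, assuming the app already logs which home-screen module each user opens. The module names and the frequency-only ranking rule are hypothetical. A production system would usually add recency weighting and avoid reshuffling the layout too often.

```python
from collections import Counter
from typing import Iterable, Sequence

# Hypothetical module identifiers, in the order a designer would pick by default.
DEFAULT_ORDER = ("balance", "transfers", "cards", "insights", "offers")


def personalised_order(opened_modules: Iterable[str],
                       default: Sequence[str] = DEFAULT_ORDER) -> list[str]:
    """Rank home-screen modules by how often this user has actually opened them."""
    counts = Counter(m for m in opened_modules if m in default)
    # sorted() is stable: ties, including modules never opened, keep the default order.
    return sorted(default, key=lambda module: -counts[module])


morning_checker = ["balance"] * 20 + ["transfers"] * 2
transfer_user = ["transfers"] * 15 + ["cards"]
print(personalised_order(morning_checker))  # ['balance', 'transfers', 'cards', 'insights', 'offers']
print(personalised_order(transfer_user))    # ['transfers', 'cards', 'balance', 'insights', 'offers']
```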

What this means if you are building or rebuilding an app in 2026:

The most important architectural question is no longer “what database should we use?” It is “what data do we need to collect from day one, and how do we structure it so the AI layer can actually use it?” If you are building from scratch, this is the conversation to have in week one. If you are upgrading an existing app, this is the audit to run before you add any AI features — because adding intelligence to a poorly structured data foundation produces poor intelligence.
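In practice, structuring data "so the AI layer can use it" is mostly unglamorous work: every observed action is recorded as a consistent, timestamped, per-user event. The sketch below shows one hypothetical shape for such a record. The field names are assumptions, not a standard schema or any specific analytics SDK's format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class BehaviourEvent:
    """One observed user action, in a shape a downstream model can consume.

    Field names are illustrative assumptions, not a standard schema.
    """
    user_id: str
    name: str                      # e.g. "screen_view", "transfer_started"
    timestamp: datetime            # must be timezone-aware
    properties: dict = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Naive timestamps make cross-device ordering ambiguous, so reject them early.
        if self.timestamp.tzinfo is None:
            raise ValueError("timestamp must be timezone-aware")

    def to_json(self) -> str:
        record = asdict(self)
        # Normalise to UTC ISO-8601 so every event sorts and joins consistently.
        record["timestamp"] = self.timestamp.astimezone(timezone.utc).isoformat()
        return json.dumps(record, sort_keys=True)


event = BehaviourEvent("u_123", "screen_view",
                       datetime(2026, 4, 1, 8, 30, tzinfo=timezone.utc),
                       {"screen": "balance"})
print(event.to_json())
```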

At Moonstack, when we begin a new app project today, the first technical conversation is about the data model and the AI layer. That shift happened in the last 18 months, and it is now standard across every project we take on.

Trend 2: Hyper-Personalisation — Every User Gets Their Own Version of Your App

The word “personalisation” has been in mobile app marketing for a decade. But what it meant in 2019 — showing a user their name in a push notification, remembering their last search — is almost embarrassingly basic compared to what it means in 2026.

Hyper-personalisation in 2026 means the app adapts in real time across every layer of the experience: the content, the interface layout, the notification timing, the pricing offers, the onboarding sequence, the feature discovery path, and even the tone of in-app copy. Two users who download the same app on the same day can end up with experiences that look and feel like different products within a week.

The business case for this is not subtle. Apps using AI-powered personalisation report:

- Up to 62% higher engagement rates compared to non-AI apps

- Up to 80% higher conversion rates on in-app purchases and upgrades

- Up to 15% lower churn in the first 90 days

- 10–30% higher ARPU (average revenue per user) through personalised pricing and offer timing

- 3x higher open rates on smart, context-triggered push notifications vs. broadcast notifications

- 35% increase in app opens driven by personalised content suggestions

These numbers are not from theoretical models. They are from live apps with real users where AI personalisation was A/B tested against static experiences.

The data that powers this:

Hyper-personalisation is only as good as the data that feeds it. In 2026, apps are drawing on several streams simultaneously: in-app behavioural signals (what users tap, scroll past, ignore), zero-party data (preferences users actively share), first-party transaction data, time and location context, and increasingly, sensor data from wearables and device hardware. The apps that combine these streams thoughtfully — not just volumetrically — are the ones producing genuinely useful personalisation rather than creepy surveillance.

A real example from our work:

When we built the investment advisory features for Pickright, the core design question was: how do you surface the right stock ideas to the right user at the right moment, from a universe of thousands of potential recommendations? The answer was a personalised recommendation engine that weighted suggestions based on each user’s stated risk profile, their portfolio composition, the sectors they had historically engaged with, and current market conditions. Two users with similar portfolios but different trading histories see meaningfully different recommendation feeds. That is hyper-personalisation working as it should — not as a marketing trick, but as a product that genuinely gets smarter about each person over time.
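The actual Pickright engine is not described in detail here. The following is a hypothetical sketch of the idea rather than its implementation: a linear score over the four signals the paragraph names (risk profile, portfolio composition, sector engagement history, market conditions). The 1–5 risk scale, the weights, and the assumption that a separate model supplies a market signal between 0 and 1 are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    ticker: str
    sector: str
    risk: int              # 1 (low) to 5 (high), hypothetical scale
    market_signal: float   # 0..1, assumed to come from a separate market-conditions model


@dataclass
class UserProfile:
    risk_tolerance: int                  # same 1-5 scale as Idea.risk
    sector_weights: dict[str, float]     # share of current portfolio per sector
    sector_engagement: dict[str, int]    # past views/taps per sector


# Illustrative weights; a production system would tune these, not hard-code them.
WEIGHTS = {"risk_fit": 0.35, "diversification": 0.2, "engagement": 0.25, "market": 0.2}


def score(idea: Idea, user: UserProfile) -> float:
    risk_fit = 1 - abs(idea.risk - user.risk_tolerance) / 4        # 1.0 = exact match
    diversification = 1 - user.sector_weights.get(idea.sector, 0.0)  # favour underweight sectors
    total = sum(user.sector_engagement.values()) or 1
    engagement = user.sector_engagement.get(idea.sector, 0) / total
    return (WEIGHTS["risk_fit"] * risk_fit
            + WEIGHTS["diversification"] * diversification
            + WEIGHTS["engagement"] * engagement
            + WEIGHTS["market"] * idea.market_signal)


def recommend(ideas: list[Idea], user: UserProfile, k: int = 3) -> list[str]:
    ranked = sorted(ideas, key=lambda idea: score(idea, user), reverse=True)
    return [idea.ticker for idea in ranked[:k]]
```

With this scoring, two users who have identical portfolios and risk tolerance but different engagement histories get different top picks, which is the behaviour the paragraph describes.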

Trend 3: On-Device and Edge AI — Smarter, Faster, and Private by Default

For the first two decades of the smartphone era, “intelligence” in mobile apps meant sending data to a server, processing it in the cloud, and returning a result. Fast mobile connectivity made this invisible most of the time. But it created a structural dependency: the app’s intelligence required connectivity, and every intelligent interaction left a data trail on a remote server.

Edge AI — running machine learning models directly on the device, without a round-trip to the cloud — is changing that in 2026, and it matters more than most people realise.

Why on-device AI is a genuine competitive advantage right now:

Speed. On-device inference removes the network round trip entirely, so for lightweight tasks it responds far faster than a cloud call. When a user types a search query in your app, on-device AI can begin predicting their intent before they finish the second word. A cloud round trip, even one as short as 200ms, is enough to break the feeling of real-time responsiveness.

Privacy and trust. Apple’s Core ML and Google’s on-device Gemini integration have made privacy-preserving AI a first-class feature of both major platforms. Users in 2026 are more privacy-conscious than ever — especially in India, where data sovereignty is an increasingly active regulatory and cultural conversation. An app that processes sensitive data (health metrics, financial transactions, location history) on-device, never sending it to an external server, can credibly say: your data never leaves your phone. That is a material trust advantage.

Offline capability. India’s smartphone users number in the hundreds of millions, and millions of them live in Tier 2 and Tier 3 cities with inconsistent 4G coverage and emerging 5G penetration. An app that functions intelligently offline, not just for basic tasks but for AI-powered features, captures users that cloud-dependent apps lose. This is not a minor market. It is a massive one.

Compliance. GDPR in Europe and India’s own data protection regulations are increasingly hostile to unnecessary data collection and transfer. On-device AI is not just a performance choice. For many app categories — healthcare, finance, education — it is becoming the only architecturally defensible choice.

What we are building with edge AI at Moonstack:

On recent app builds, we have integrated on-device models for real-time language processing (search and query prediction), biometric authentication that processes locally, camera-based features that work without connectivity, and lightweight recommendation models that run on-device for speed while syncing with a cloud model for deeper updates. The user never sees the complexity. They just notice the app feels faster and more responsive than the alternatives.
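The hybrid pattern in the last paragraph (score locally, pull deeper updates from the cloud) can be sketched in a few lines. This is a language-neutral illustration written in Python. It does not use Core ML or any real on-device runtime API, and the update payload format with a `version` number is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class OnDeviceRecommender:
    """Tiny linear scorer that runs locally and accepts newer weights from a server.

    The update payload shape ({"version": int, "weights": {...}}) is an assumption.
    """
    version: int = 0
    weights: dict[str, float] = field(default_factory=dict)

    def score(self, features: dict[str, float]) -> float:
        # Pure local computation: no network call, so this keeps working offline.
        return sum(self.weights.get(name, 0.0) * value for name, value in features.items())

    def apply_update(self, payload: dict) -> bool:
        """Swap in cloud-trained weights only if they are newer than the local copy."""
        if payload.get("version", -1) <= self.version:
            return False
        self.weights = dict(payload["weights"])
        self.version = payload["version"]
        return True
```

Scoring never touches the network, so the feature works offline. The version check stops a stale or replayed update from overwriting newer weights.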

Trend 4: Multimodal AI Interfaces — Apps That See, Hear, and Understand Together

Most apps today understand one thing at a time. You type a query, or you tap a button, or you take a photo — and the app responds to that single input. That is changing.

Multimodal AI is the ability to process multiple input types simultaneously — text, voice, images, video, sensor data — and generate a unified, contextually aware response. This is not a laboratory capability in 2026. It is available in production, powered by models like Google’s Gemini (deeply embedded in Android) and Apple’s on-device AI frameworks (deeply embedded in iOS).

What multimodal AI enables in real apps:

In a real estate app, a user points their phone at a building. The app simultaneously identifies the structure from the camera feed, checks the user’s location, and pulls listing data — all before the user has said or typed a single thing. They did not search. They just looked.

In a healthcare app, a user photographs a skin condition and says “this appeared three days ago, is it getting worse?” The multimodal AI processes the image for visual analysis, understands the time context from the spoken query, and returns a structured response that combines visual pattern recognition with symptom guidance — all in seconds.

In a retail app, a user takes a photo of a pair of shoes they saw on the street. The app identifies the brand, style, and finds the closest available options in the user’s size and budget. No typing. No browsing. Just showing.

In an enterprise logistics app, a warehouse worker photographs a damaged item while speaking a description of the damage. The app creates a structured incident report from both inputs at once, cutting a 10-minute process to 30 seconds.
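The logistics example depends on fusing two model outputs into a single record. The sketch below assumes an upstream vision model has already returned labels with confidences and a speech-to-text model has already returned a transcript. The keyword-based severity rule and the report fields are hypothetical placeholders for what would usually be a model decision.

```python
import re
from dataclasses import dataclass


@dataclass
class IncidentReport:
    item_id: str
    damage_type: str
    severity: str
    description: str


# Hypothetical keyword list; a real system would more likely get severity from a model.
SEVERE_WORDS = ("crushed", "shattered", "leaking", "torn")


def build_report(item_id: str, image_labels: dict[str, float], transcript: str) -> IncidentReport:
    """Combine a vision model's label confidences with a speech transcript.

    Both inputs are assumed to come from upstream models that are not shown here.
    """
    # The image decides *what* kind of damage it is: take the most confident label.
    damage_type = max(image_labels, key=image_labels.get) if image_labels else "unknown"
    # The spoken description adds context the photo cannot, such as how bad it is.
    text = transcript.lower()
    severe = any(re.search(rf"\b{word}\b", text) for word in SEVERE_WORDS)
    return IncidentReport(item_id, damage_type, "high" if severe else "standard", transcript.strip())
```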

Why this matters for app design:

Multimodal AI changes the interaction model fundamentally. The best mobile interfaces of 2026 are not just better touch screens — they are ambient systems that understand context from multiple signals at once. Design teams working on multimodal apps need to think about UX in new ways: what does “input” mean when users can simultaneously show, speak, and gesture? At Moonstack, our UI/UX team has begun approaching multimodal features as interaction systems rather than individual features — mapping the full range of input combinations users might reach for, and designing flows that handle them gracefully.

Trend 5: AI-Powered Voice and Conversational UX — Your App as a Thinking Partner

The early wave of voice assistants — Siri, Google Assistant, Alexa circa 2017–2020 — set expectations low. They could set timers, play music, and answer basic factual questions. When they failed, they failed loudly with “I’m sorry, I didn’t catch that.” Users learned not to trust them for anything complex.

That era is over.

In 2026, conversational AI in mobile apps understands context that spans entire sessions, remembers prior interactions, handles ambiguity, understands domain-specific vocabulary, and can take autonomous multi-step actions on behalf of the user. The difference between a chatbot from 2020 and a conversational AI interface from 2026 is roughly the difference between a vending machine and a knowledgeable colleague.

The numbers tell the engagement story clearly:

- Voice-based interactions produce 2x longer engagement per session than typed interactions for equivalent tasks

- AI-powered conversational support reduces customer service costs by up to 40% while maintaining 24/7 availability

- Apps with context-aware AI assistants see 40% lower abandonment rates when users encounter friction in a flow

What this looks like in a real app:

In a food delivery app with conversational AI, a user says: “Order my usual, but no onions this time, and make it arrive before 1 — I have a meeting.” The AI parses the intent (usual order modification + delivery deadline), confirms the adjustment, places the order, and sends a proactive notification at 12:45 if there is a delay risk. The user never opened a menu. They never tapped a filter. They just spoke a sentence.
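Under the hood, the food delivery request becomes a structured intent plus a scheduled check. The sketch below uses a few regular expressions as a stand-in for the language model a real app would use to parse the sentence. The field names, the "hours below 8 mean p.m." rule, and the 15-minute alert lead time (chosen to match the 12:45 example) are all assumptions.

```python
import re
from dataclasses import dataclass, field
from datetime import datetime, time, timedelta
from typing import Optional


@dataclass
class OrderIntent:
    reorder_usual: bool
    remove_items: list[str] = field(default_factory=list)
    deadline: Optional[time] = None


def parse_order(utterance: str) -> OrderIntent:
    """Rule-based stand-in for the language model that would do this parsing in production."""
    text = utterance.lower()
    removals = re.findall(r"\bno (\w+)", text)  # "no onions" -> ["onions"]
    match = re.search(r"\bbefore (\d{1,2})(?::(\d{2}))?", text)
    deadline = None
    if match:
        hour = int(match.group(1))
        if hour < 8:  # assumption: "before 1" for a lunch order means 13:00, not 01:00
            hour += 12
        deadline = time(hour, int(match.group(2) or 0))
    return OrderIntent("usual" in text, removals, deadline)


def needs_delay_alert(now: datetime, eta: datetime, deadline: time,
                      lead: timedelta = timedelta(minutes=15)) -> bool:
    """True once we are within `lead` of the deadline and the current ETA would miss it."""
    due = datetime.combine(now.date(), deadline)
    return now >= due - lead and eta > due
```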

In an enterprise SaaS app, a sales manager says: “Show me accounts in the Rajasthan region that haven’t been contacted in 30 days and have a deal value above 5 lakhs.” The conversational AI translates this natural language query into a filtered data view, displays it inline, and offers to draft follow-up email templates for each account. What used to require three separate navigation steps and a filter UI now happens in one sentence.
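The key design point in the SaaS example is that the language model only has to produce a small structured filter. Applying that filter is ordinary, deterministic code. Here is a hypothetical sketch of such a filter, with the sentence's three conditions mapped onto fields (1 lakh = 100,000 rupees). Turning the spoken sentence into this object is the model's job and is not shown.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Account:
    name: str
    region: str
    last_contacted: date
    deal_value_inr: int


@dataclass
class AccountFilter:
    """The structured query a language model would be asked to emit (hypothetical schema)."""
    region: str
    days_without_contact: int   # inclusive: at least this many days since last contact
    deal_value_above_inr: int   # exclusive: strictly above this value

    def apply(self, accounts: list[Account], today: date) -> list[Account]:
        return [a for a in accounts
                if a.region == self.region
                and (today - a.last_contacted).days >= self.days_without_contact
                and a.deal_value_inr > self.deal_value_above_inr]


# "Rajasthan, not contacted in 30 days, deal value above 5 lakhs"
query = AccountFilter(region="Rajasthan", days_without_contact=30,
                      deal_value_above_inr=5 * 100_000)
```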

The key shift: from reactive to agentic

The most significant development in conversational AI for apps is not better voice recognition — it is the shift from reactive responses to agentic action. Old conversational interfaces responded to commands. New ones complete goals. The user states an outcome they want; the AI figures out the steps to achieve it. This is the architectural difference that makes 2026’s voice interfaces feel qualitatively different from anything that came before.

At Moonstack, we have integrated conversational AI layers into fintech and e-commerce apps for clients, and the impact shows up in two places consistently: support ticket volume drops significantly within the first month post-launch, and session length increases because users discover features through conversation they would never have found through navigation.

Trend 6: Predictive Analytics and Smart Automation — Apps That Know What You Need Before You Ask

There is a version of personalisation that is reactive — it learns from what you have done and shows you more of it. Then there is a version that is predictive — it models what you are likely to do next and prepares the experience before you arrive.

The best apps in 2026 are built on predictive intelligence, not just responsive intelligence.

What predictive AI does in a mobile app:

It does not wait for a user to churn — it identifies the behavioural signals that precede churn (a user who used to open the app daily starts opening it every three days, then once a week) and triggers an intervention before the user leaves. It does not wait for a user to ask for help — it identifies where in a flow they are about to get stuck (based on aggregate patterns from thousands of prior users) and surfaces contextual guidance proactively. It does not wait for a user to search for a product — it predicts based on seasonal patterns, past behaviour, and contextual signals what they are likely to want this week, and surfaces it on the home screen.
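The churn signal described above (daily opens stretching to every three days, then weekly) can be detected by comparing a user's recent gaps between sessions with that same user's earlier gaps. Here is a minimal sketch. The window size and the 2.5x threshold are illustrative assumptions, not tuned values, and a production model would typically combine many more signals.

```python
from datetime import date


def session_gaps(open_dates: list[date]) -> list[int]:
    """Days between consecutive app opens, oldest first."""
    days = sorted(open_dates)
    return [(later - earlier).days for earlier, later in zip(days, days[1:])]


def churn_risk(open_dates: list[date], window: int = 3, ratio: float = 2.5) -> bool:
    """Flag a user whose recent gaps between opens are much longer than their usual gaps.

    `window` and `ratio` are illustrative thresholds, not tuned values.
    """
    gaps = session_gaps(open_dates)
    if len(gaps) < 2 * window:
        return False  # not enough history to compare against a baseline
    earlier = gaps[:-window]
    baseline = sum(earlier) / len(earlier)
    recent = sum(gaps[-window:]) / window
    return recent >= ratio * baseline
```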

The metrics behind this are compelling:

- Apps with predictive personalisation see 30% higher retention rates over a 90-day window

- Context-aware, predictive push notifications achieve 3x higher open rates than broadcast notifications

- Predictive churn models reduce early-stage abandonment by 40% when paired with proactive in-app interventions

- AI-powered recommendation engines in e-commerce apps increase average order value by 10–50% compared to manual curation

A concrete example from our client work:

For EnKash, we built a predictive spend analytics layer that analysed corporate transaction patterns to forecast cash flow needs before month-end. The product did not wait for a finance manager to run a report — it surfaced a proactive alert: “Based on your payment schedule and current balances, you may face a liquidity gap on the 28th. Here are three options.” That is predictive AI doing exactly what it should: converting raw data into a useful, timely action that the user would not have taken on their own.

The EnKash platform went on to raise $23M, and the predictive financial intelligence layer was a core part of what differentiated it in a competitive market.
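The EnKash model itself is not public. What follows is a simplified, hypothetical illustration of the kind of alert quoted above: a deterministic projection of the month's balance from scheduled receipts and payments, which returns the first day the balance falls below a safety buffer. A real forecast would also estimate unscheduled spend rather than rely on known payments alone.

```python
from collections import defaultdict
from typing import Optional


def first_shortfall_day(balance: float, start_day: int, end_day: int,
                        scheduled: list[tuple[int, float]],
                        buffer: float = 0.0) -> Optional[int]:
    """Walk the month day by day and return the first day the balance dips below `buffer`.

    `scheduled` holds (day_of_month, amount) pairs: positive for expected receipts,
    negative for payments due. Returns None if no shortfall is projected.
    """
    net_by_day = defaultdict(float)
    for day, amount in scheduled:
        net_by_day[day] += amount  # several items can fall on the same day
    for day in range(start_day, end_day + 1):
        balance += net_by_day[day]
        if balance < buffer:
            return day
    return None
```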

Trend 7: Generative AI for Content and UI — Apps That Create, Not Just Display

Generative AI is the trend everyone is talking about, but most apps are still using it at the surface level — a chatbot, an image generator, an auto-complete field. The apps that will lead their categories in the next two years are using generative AI at a structural level, to produce content, interfaces, and experiences that are fundamentally dynamic rather than static.

The market context:

The AI app sector reached $16.5 billion in revenue in 2025, a 180% increase on the prior year. Consumer spending on generative AI apps is projected to exceed $10 billion in 2026, placing it among the most lucrative mobile categories on the planet. Generative AI is forecast to jump from #10 in global app downloads in 2025 to #4 in 2026, ranking ahead of established categories like multimedia, design, and shopping.

These numbers describe a category that is moving from early adopter to mainstream. The users are already there. The question is whether the products are ready for them.

What generative AI looks like inside apps that are leading their categories:

In an education app, generative AI creates entirely new practice problems tailored to each student’s specific weak areas — not drawing from a pre-written question bank, but generating novel questions that test the exact skills the student needs to work on. Every student gets a curriculum that has never existed before.
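The generative step needs a targeting step in front of it: deciding which skills a student is weakest at and how hard the next questions should be. Here is a minimal sketch of that selection, assuming per-skill correct/attempted counts are tracked. The request format and the 50% difficulty rule are hypothetical. The question text itself would come from a generative model given these specs.

```python
from dataclasses import dataclass


@dataclass
class SkillStats:
    correct: int
    attempted: int

    @property
    def accuracy(self) -> float:
        return self.correct / self.attempted if self.attempted else 0.0


def practice_request(stats: dict[str, SkillStats], n_skills: int = 2) -> list[dict]:
    """Pick the weakest skills and describe what a question generator should produce.

    The request format and the difficulty rule are hypothetical.
    """
    weakest = sorted(stats, key=lambda skill: stats[skill].accuracy)[:n_skills]
    requests = []
    for skill in weakest:
        acc = stats[skill].accuracy
        # Below 50% accuracy, step difficulty down; otherwise hold it steady.
        difficulty = "easier" if acc < 0.5 else "same"
        requests.append({"skill": skill, "difficulty": difficulty, "accuracy": round(acc, 2)})
    return requests
```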

In a content creation app, generative AI does not just assist with writing — it maintains a brand voice model for each user, generating copy that sounds like them, using their vocabulary patterns, their preferred sentence structure, their established tone. The output feels authored, not generated.

In a B2B SaaS app, generative AI produces personalised onboarding flows for each new user based on their role, their company size, and the specific features most relevant to their use case. A user who works in finance at a 500-person company gets a completely different onboarding experience than a solo founder using the same product. No human configures this. The AI reads the context and builds the path.

What generative AI means for app development itself:

This is the part many business owners are underestimating. Generative AI is not just changing what apps can do — it is changing how fast apps can be built. App releases are up 60–104% year-over-year in early 2026, and a significant portion of that acceleration is driven by AI-assisted development tools. At Moonstack, we now use generative AI tooling across our design and development sprints — cutting prototype-to-approval cycles from weeks to days. Our clients get more design iterations, more thoroughly tested flows, and faster time-to-market. That is not a side benefit. For a startup racing to launch, that acceleration can be the difference between reaching users before a competitor does and reaching them after.

What These 7 Trends Actually Mean for Your Business

Here is the honest version of what we tell clients when they ask about AI for their mobile app:

You do not need all 7 of these trends at once. Trying to implement every capability simultaneously produces bloated, unfocused products. The right approach is to identify which two or three trends have the most direct impact on your specific users’ biggest friction points — and build those first, properly.

The three questions that determine your priority:

- Where are your users dropping off today? If churn is the problem, predictive analytics and conversational AI for support are your first investments.

- What does your app know about each user — and is it actually using that knowledge? If the answer is “not much” or “not really,” AI-native architecture and a proper data pipeline are the foundation to build before anything else.

- What would make your app feel like it was built specifically for each person who uses it? The answer to that question is your personalisation roadmap.

Industries where we are seeing the fastest ROI from AI integration right now:

Fintech and payments: Predictive cash flow, conversational support, fraud pattern detection. Apps that add these capabilities are seeing measurable improvements in user trust and daily active usage.

Healthcare and wellness: On-device AI for privacy-sensitive health data, multimodal input for symptom tracking, predictive alerts for health risks before they escalate.

E-commerce and retail: Hyper-personalised product feeds, visual search via multimodal AI, conversational commerce that replaces browse-and-filter with speak-and-find.

Real estate: Multimodal AR overlays for property viewing, AI-powered matching between users and listings based on behaviour rather than stated preferences alone, conversational search that understands qualitative descriptions (“something quieter, with good natural light, near a school”).

EdTech: Adaptive learning paths generated by AI, personalised content pacing, generative practice material that adjusts difficulty in real time.

The common thread across all of these is that AI works best when it is solving a specific, real problem a user has — not when it is demonstrating capability for its own sake.

The Bottom Line

The mobile app landscape in 2026 is not divided between apps that have AI and apps that do not. It is divided between apps that have built AI well and apps that are already losing users to the ones that have.

If you are planning a new mobile app — or wondering whether your existing app is keeping up — the conversation you need to have is not about features. It is about architecture, data strategy, and which AI capabilities will actually move the needle for your specific users.

Schedule a free strategy call with our team →

How much does it cost to add AI features to an existing mobile app?

It depends significantly on the type of AI features and the quality of your existing data infrastructure. Simple features — an AI chatbot, basic recommendation logic — can be integrated into an existing app for $15,000–$40,000 depending on complexity. More sophisticated features like real-time hyper-personalisation, on-device model integration, or full conversational AI typically range from $40,000–$120,000+. The most important cost driver is not the AI itself — it is the data pipeline work required to feed it. Apps with clean, structured user behaviour data integrate AI much faster and at lower cost than apps that need foundational data work first. [Get a free assessment of your current app’s AI readiness from Moonstack →]

Do I need to rebuild my app from scratch to add AI, or can I upgrade?

In most cases, no full rebuild is required. The practical answer depends on two things: the quality of your current data model, and the type of AI capabilities you want to add. Surface-level AI features (chatbots, basic personalisation, predictive search) can almost always be added to an existing app. Deeper AI-native capabilities — real-time adaptive interfaces, on-device ML models, complex recommendation engines — sometimes require architectural changes to the data layer that effectively mean rebuilding a significant portion of the backend. Our standard recommendation is a technical audit before deciding: understand what you have, what you want, and what the path between them actually looks like.

Is AI in mobile apps relevant for Indian businesses specifically, or is this a Western market trend?

It is profoundly relevant for Indian businesses — arguably more so. India is one of the fastest-growing markets for AI app adoption globally. The generative AI market in India is expanding its user base faster than any other region except the US. On-device AI addresses India-specific infrastructure realities around connectivity. And Indian users in every category — fintech, healthcare, e-commerce, agri-tech — have shown strong adoption of AI-powered mobile features when those features solve real problems at appropriate price points. If you are building a mobile app for Indian users in 2026, ignoring AI is not playing it safe. It is falling behind.

How long does it take to build an AI-powered mobile app?

A well-scoped AI-powered mobile app can be built in 1–4 months depending on complexity. An MVP with two or three core AI features typically takes 3–4 months with the right team. The timeline is most affected by the data infrastructure work (which often takes longer than the AI feature work itself) and the iteration cycles required to tune AI models to real user behaviour. At Moonstack, we have delivered AI-integrated apps in as few as 10 weeks for clients with clear scopes and clean data.
